Airship Update - December 2020

What follows is the Airship Community Update for December 2020, brought to you by the Airship Technical Committee.

AIRSHIP BLOG

The Airship blog is a great way to keep up with what's going on in the community. The Airship community publishes blog posts regularly, including the Airship 2.0 Blog series. These blog posts introduce the changes from Airship 1.0 to Airship 2.0, highlight new features, and detail each component's evolution. The recommended reading order for these blogs is listed below. It begins with the lessons learned on the road to Alpha, to address the changes made from the original blog series 1-6.

- Airship 2.0 is Alpha - Lessons Learned

- Pre-Alpha Airship Blog Series 1 - Evolution Towards 2.0

- Pre-Alpha Airship Blog Series 2 - An Educated Evolution

- Pre-Alpha Airship Blog Series 3 - Airship 2.0 Architecture High Level

- Pre-Alpha Airship Blog Series 4 - Shipyard - an Evolution of the Front Door

- Pre-Alpha Airship Blog Series 5 - Drydock and Its Relationship to Cluster API

- Pre-Alpha Airship Blog Series 6 - Armada Growing Pains

Stay tuned for upcoming blog posts as Airship 2.0 progresses through release!

AIRSHIP CONTAINER AS A SERVICE

Two operators will manage the lifecycle of Virtual Machines and their relationship to the cluster: vNode-Operator (ViNO) and the Support Infra Provider (SIP). These operators are new projects to the Airship ecosystem and were migrated to OpenDev earlier this month.

Now, you may be asking: why are we creating two more projects? It's a fair question, so let's discuss the motivation behind them. These projects focus on a particular group of Airship users: those who want to support multi-tenancy.

Airship was created as a solution to managing Kubernetes workloads, and over time a lot of these workloads have become Kubernetes native. Some of those workloads, however, have needs that exceed those of individual Kubernetes clusters, which limits hard multi-tenancy. An example of this would be a Custom Resource Definition (CRD), a cluster-wide object that does not facilitate namespacing or other ways of segregating it. This can lead to issues if one cluster wishes to consume a particular version of Istio, but that version of Istio would conflict in a different cluster.

So we need a way to have multiple Kubernetes clusters, preferably in a single hardware region. We examined several ways to segregate these out, including:

- Splitting up a datacenter into multiple Airship managed regions

- Deploying infrastructure using tools like OpenStack or Kubevirt (essentially enabling a single large undercloud cluster, like in Airship 1, to provision multiple clusters above it)

We felt that encapsulating smaller clusters over a cluster deployed directly on bare-metal was the right decision. This approach supports aggregating hardware to provide a common Ceph service consumed by the clusters above, and allows more granular lifecycle control of the clusters operating tenant workloads. The question then becomes: how do we split the bare-metal nodes into consumable units that Cluster API (CAPI) can provision and that can participate in multiple Kubernetes clusters?

Tools like Kubevirt were examined, but many of the workloads we run are specialized and need low-level tweaking of how Virtual Machines operate. We felt that the benefits of these tools were outweighed by the issues encountered in enabling that tweaking, which led to a significant amount of abstraction.

It became apparent that a simpler, cruder deployment would make more sense for us. Two new operators, SIP and ViNO, were conceived as a solution.

So what does each of these operators do, at a thousand-foot view?

ViNO (Virtual Node Operator)

- Lays down libvirt and Virtual Machine definitions across bare-metal hosts

- Fronts the Virtual Machines with sushy-tools for Redfish interactions via Metal3 et al.

- Generates BareMetalHost definitions for the Virtual Machines

SIP (Support Infrastructure Provider)

- Creates a load balancer, essentially an HAProxy instance deployed across the nodes, allowing kubeadm to instantiate a highly available cluster

- Provides utilities such as a jump pod

- Facilitates CAPI (Cluster API) scheduling, e.g. "I need VMs with certain characteristics"

These projects are related to each other through CAPI, which will provision via Metal3 against the VMs created by ViNO and labeled by SIP.
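To make the hand-off between the two operators more concrete, here is a minimal Python sketch of the flow described above. It is an illustration only and not the ViNO or SIP implementation: the `VirtualNode` model, the `example.org/...` label keys, the credentials secret naming, and the Redfish port (8000, the sushy-tools emulator default) are all assumptions made for the example. Only the `BareMetalHost` field names (`bootMACAddress`, `bmc.address`, `bmc.credentialsName`, `online`) follow the Metal3 resource.

```python
from dataclasses import dataclass, field

# Hypothetical label keys; the real operators may use different ones.
FLAVOR_LABEL = "example.org/flavor"
CLUSTER_LABEL = "example.org/cluster"
ROLE_LABEL = "example.org/role"


@dataclass
class VirtualNode:
    """A VM defined on a bare-metal host (hypothetical model for this sketch)."""
    name: str
    host: str        # address of the bare-metal host running libvirt
    system_id: str   # Redfish system ID exposed by sushy-tools
    mac: str         # MAC address the VM boots from
    labels: dict = field(default_factory=dict)


def bare_metal_host(vm: VirtualNode, redfish_port: int = 8000) -> dict:
    """ViNO-like step: render a Metal3 BareMetalHost manifest for one VM."""
    address = (
        f"redfish+http://{vm.host}:{redfish_port}"
        f"/redfish/v1/Systems/{vm.system_id}"
    )
    return {
        "apiVersion": "metal3.io/v1alpha1",
        "kind": "BareMetalHost",
        "metadata": {"name": vm.name, "labels": dict(vm.labels)},
        "spec": {
            "online": False,
            "bootMACAddress": vm.mac,
            "bmc": {
                "address": address,
                # Assumed naming scheme for the BMC credentials secret.
                "credentialsName": f"{vm.name}-bmc-secret",
            },
        },
    }


def label_for_cluster(hosts, cluster, role, count, flavor):
    """SIP-like step: claim `count` unlabeled hosts of a flavor for a cluster."""
    candidates = [
        h for h in hosts
        if h["metadata"]["labels"].get(FLAVOR_LABEL) == flavor
        and CLUSTER_LABEL not in h["metadata"]["labels"]
    ]
    if len(candidates) < count:
        raise ValueError(f"only {len(candidates)} free '{flavor}' hosts, need {count}")
    picked = candidates[:count]
    for h in picked:
        h["metadata"]["labels"][CLUSTER_LABEL] = cluster
        h["metadata"]["labels"][ROLE_LABEL] = role
    return picked


if __name__ == "__main__":
    vms = [
        VirtualNode("vm-0", "10.0.0.1", "sys-0", "52:54:00:00:00:01", {FLAVOR_LABEL: "small"}),
        VirtualNode("vm-1", "10.0.0.2", "sys-1", "52:54:00:00:00:02", {FLAVOR_LABEL: "small"}),
    ]
    hosts = [bare_metal_host(vm) for vm in vms]
    for h in label_for_cluster(hosts, "tenant-a", "worker", 2, "small"):
        print(h["metadata"]["name"], h["metadata"]["labels"])
```

In this sketch, the labeled `BareMetalHost` entries are what a CAPI provider could then select on when provisioning a tenant cluster via Metal3. The candidates are collected before any label is written, so a request that cannot be satisfied leaves every host untouched.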

Look forward to more information on both of these projects in an upcoming blog post by Pete Birley, expected in January. In the meantime, you can learn more about these projects, both of which were introduced at the PTG in October, through the recording below:

- Recording (Starts at about 3hr27m into the recording)

- Password: ptg2020!

GITHUB LABELS UPDATES

As Airship's design has evolved, so too has the need for an evolution of our issue tracking. Historically, Airship 2.0 development issues were labeled with a "component/xxx" label, but those labels have become stale. To better identify outstanding work and its priority, we are dropping the component labels in favor of the new categories below, listed in order of importance, for all remaining unassigned issues:

| LABEL | DESCRIPTION |
|---|---|
| 1-Core | Relates to airshipctl core components (i.e. Go code) |
| 2-Manifests | Relates to manifest/document set related issues |
| 3-Container | Relates to plugin related issues |
| 4-Gating | Relates to issues with Zuul & gating |
| 5-Documentation | Improvements or additions to documentation |
| 6-upstream/project | Requires a change to an external project, e.g. clusterctl |
| 7-NiceToHave | Relates to issues of lower priority that are not part of critical functionality or have workarounds |

AIRSHIP USER SURVEY

Are you evaluating Airship or using Airship in production? We want to learn from your experience! The Working and Technical Committees review each response, and your feedback helps us grow and mature Airship. Take the Airship User Survey today to be included in the next round of analysis.

AIRSHIP 2.0 PROGRESS

The progress shown below is for airshipctl, the new Airship 2.0 client.

Last month, airshipctl saw the following activity:

- 15 authors

- 31 commits

- 281 files changed

- 11,430 additions

- 817 deletions

- 11 closed issues

- 19 new issues

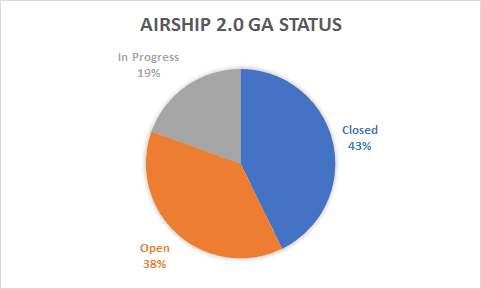

This activity is contributing to the General Availability (GA) milestone. Below is the overall status of the GA milestone, which does not include the breakdown of already completed Alpha and Beta milestones:

GET INVOLVED

This page lists everything you need to know to get involved and start contributing.

Alexander Hughes, on behalf of the Airship Technical Committee